Managed service providers rely on remote access to keep customer environments running. VPNs, jump hosts, and centralized access tools make it possible to manage infrastructure across dozens or hundreds of sites without leaving the operations center.

But during outages, these tools can become part of the problem. When remote access depends on the production network, even routine failures can cut off the access engineers need to fix issues. What should be a quick recovery turns into a prolonged outage that requires on-site intervention.

Here are some of the most common failure scenarios MSPs face, and a look at the architecture that helps overcome them.

Routing Failures

Many routing failures stem from human error. According to 2025 research from the Uptime Institute, almost 40% of organizations suffered a major outage due to human error in the last three years. If a core router is misconfigured, suffers a control-plane crash, or becomes unstable, the network paths that connect engineers to the environment may disappear entirely.

Common examples include:

- BGP route leaks or policy errors that remove upstream connectivity

- OSPF adjacency failures that break internal routing between segments

- VRF or VLAN misconfigurations that isolate management subnets

- Routing table corruption during firmware upgrades

In these situations, VPN sessions drop immediately because the path between the engineer and the VPN gateway no longer exists. Worse, the router responsible for the failure may be fully operational from a hardware perspective. All it needs is a configuration correction, but engineers can’t get the remote console access required to make it.

What should have been a 30-second configuration rollback becomes a multi-hour recovery effort.
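To make the failure concrete, here is a minimal sketch of a longest-prefix-match lookup showing how one withdrawn route removes the path to a VPN gateway. The addresses, the `lookup` helper, and the route tables are all hypothetical (the IPs come from documentation ranges), and a real router does far more than this.

```python
import ipaddress


def lookup(routes, destination):
    """Return the next hop for the longest matching prefix, or None if no route matches."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), next_hop)
        for prefix, next_hop in routes.items()
        if dest in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None
    # The most specific (longest) prefix wins.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


# Hypothetical values for illustration only.
VPN_GATEWAY = "203.0.113.10"
routes_before = {"0.0.0.0/0": "198.51.100.1", "10.0.0.0/8": "10.0.0.1"}
# Assume a policy error withdrew the default route.
routes_after = {"10.0.0.0/8": "10.0.0.1"}

path_before = lookup(routes_before, VPN_GATEWAY)
path_after = lookup(routes_after, VPN_GATEWAY)

print("before:", path_before)
print("after: ", path_after)
```

In this sketch the internal `10.0.0.0/8` route survives, which mirrors the situation above: the router is still up and forwarding internally, but nothing outside can reach it.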

Firewall Policy Errors

Firewall misconfigurations are one of the most common causes of remote access loss. Modern firewall policies are often pushed automatically through orchestration systems, policy templates, or automated compliance updates. These systems are great for consistency, but they introduce new failure modes.

A few examples include:

- A security policy update accidentally blocking VPN management traffic

- A zone-based firewall rule preventing internal device access

- A NAT configuration error breaking inbound VPN connections

- An automated policy sync overwriting existing allow rules

Often, the firewall itself remains online and functional. The only issue is a misconfigured rule. But because the firewall sits directly in the remote access path, it becomes unreachable, just like the router in the previous scenario. Engineers may be able to confirm the outage through monitoring systems, but without access to the firewall CLI or console, there is no way to correct the configuration remotely.
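As a rough illustration of how an automated sync can lock engineers out, the sketch below evaluates an ordered rule list with first-match semantics and an implicit default deny. The `Rule` type, the NOC address, and both policies are hypothetical; real firewalls match on many more fields.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    action: str           # "allow" or "deny"
    source: str           # source network in CIDR notation
    port: Optional[int]   # None means any port


def evaluate(rules, source_ip, port):
    """Return the action of the first matching rule; deny if nothing matches."""
    src = ipaddress.ip_address(source_ip)
    for rule in rules:
        if src in ipaddress.ip_network(rule.source) and rule.port in (None, port):
            return rule.action
    return "deny"  # implicit default deny


def blocks_management(rules, mgmt_ip, mgmt_port):
    """True if the policy would cut off the management connection."""
    return evaluate(rules, mgmt_ip, mgmt_port) != "allow"


# Hypothetical values for illustration only.
NOC_IP, VPN_PORT = "192.0.2.50", 443
current_policy = [Rule("allow", "192.0.2.0/24", 443), Rule("deny", "0.0.0.0/0", None)]
# Assume an automated sync replaced the policy and dropped the allow rule.
synced_policy = [Rule("deny", "0.0.0.0/0", None)]

print("current blocks NOC:", blocks_management(current_policy, NOC_IP, VPN_PORT))
print("synced blocks NOC: ", blocks_management(synced_policy, NOC_IP, VPN_PORT))
```

A check like `blocks_management` could run before a policy push, but it only helps with changes that pass through it. Once a bad rule is live, the firewall is still only reachable through a path it no longer permits.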

WAN or ISP Outages

Many MSP environments rely on customer WAN circuits to provide remote management access. Failures on these circuits cut remote connectivity regardless of the health of the internal infrastructure. Fiber cuts, for example, are one of the most common causes of outages that last 48 hours or longer.

Common scenarios include:

- Carrier fiber cuts (looking at you, backhoe operators 😜)

- Last-mile circuit failures at branch locations

- ISP routing incidents causing upstream blackholing

- DDoS mitigation events that disrupt inbound traffic

Image: Behold, the natural predator of fiber cables.

Customer networks may still be operating internally. Devices are running, servers are responding, and monitoring systems might still be collecting metrics locally. But engineers outside the network have no path into the environment. Even simple recovery actions like restarting an edge router or verifying a routing table may require on-site access.

Authentication Infrastructure Failures

Jump host environments depend on centralized authentication systems such as Active Directory, LDAP directories, or identity federation platforms. When these go down, engineers get locked out of their own management infrastructure.

This can happen due to:

- Active Directory replication failures

- Expired domain controller certificates

- LDAP service crashes

- Identity provider outages affecting SSO login flows

Engineers can usually still reach the jump host in these scenarios, but they can’t log in because authentication fails. The result is the same: engineers can see the problem, but they can’t access the systems required to fix it.

DNS and Management Service Failures

Another subtle failure mode occurs when core infrastructure services degrade. Many management environments rely on DNS resolution, certificate validation, or internal service discovery mechanisms.

If DNS services fail or management service endpoints become unavailable:

- Jump hosts may not resolve device hostnames

- SSH connections may fail due to certificate validation errors

- Automation platforms lose connectivity to managed infrastructure

The devices themselves may still be reachable, but the tools engineers rely on stop working.
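One way to picture the dependency is a lookup that falls back to a static inventory when DNS fails. This is only a sketch under stated assumptions: `resolve_with_fallback`, the inventory, and the hostname are hypothetical, and the resolver is injectable so the failure can be simulated without touching a real DNS server.

```python
import socket


def resolve_with_fallback(hostname, static_inventory, resolver=socket.gethostbyname):
    """Try DNS first, then fall back to a locally stored address for the device."""
    try:
        return resolver(hostname), "dns"
    except OSError:  # socket.gaierror is a subclass of OSError
        if hostname in static_inventory:
            return static_inventory[hostname], "static"
        raise


def failing_dns(hostname):
    """Stand-in resolver that simulates a DNS outage."""
    raise socket.gaierror("simulated DNS outage")


# Hypothetical inventory for illustration only.
INVENTORY = {"edge-router-01.example.net": "192.0.2.1"}

address, source = resolve_with_fallback(
    "edge-router-01.example.net", INVENTORY, resolver=failing_dns
)
print(address, "via", source)
```

The fallback only covers name resolution. If the device can be reached solely over the production network, knowing its address does not restore access during a wider outage.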

The Pattern Behind These Failures

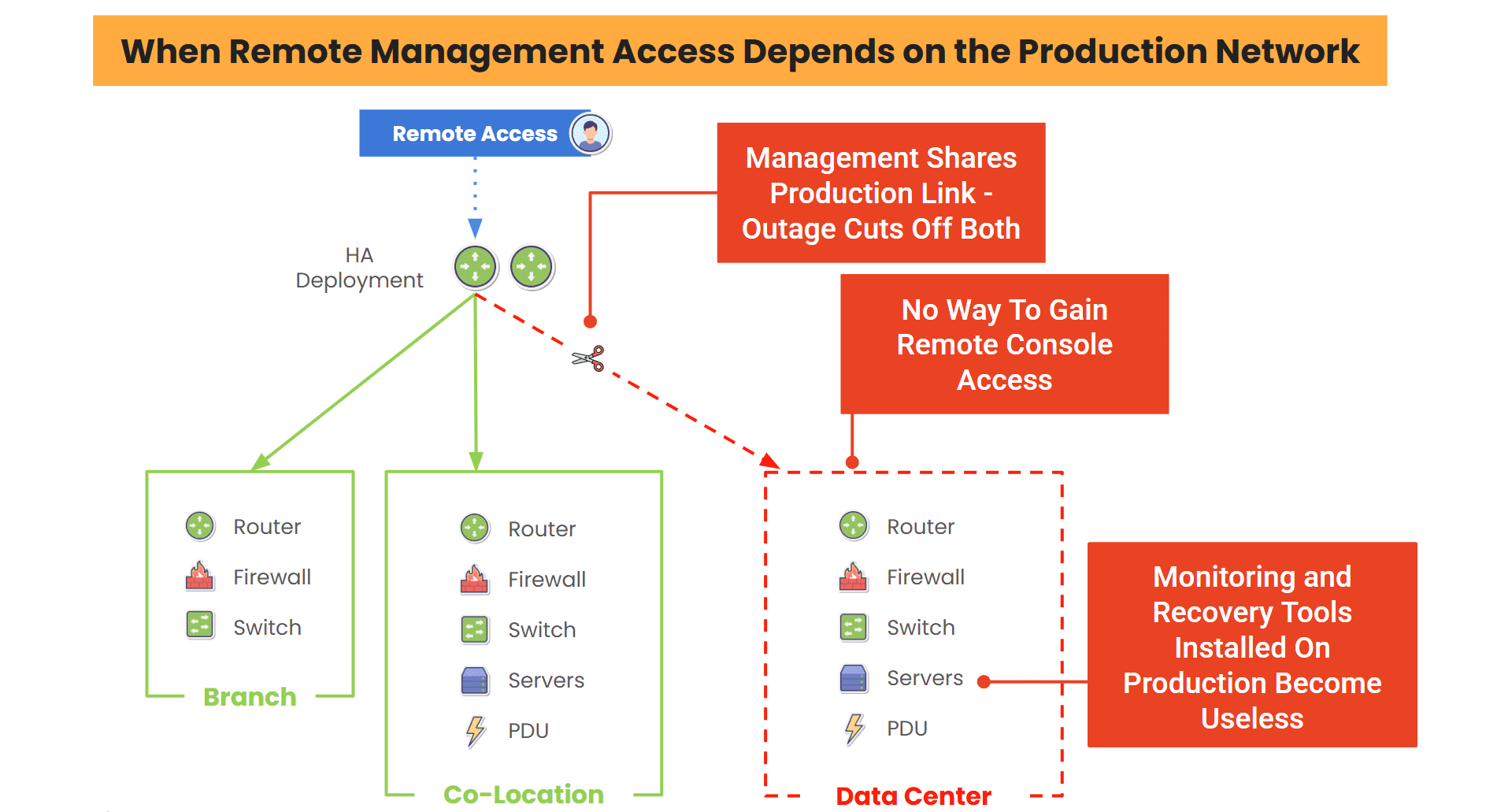

These scenarios might seem unrelated, but they all share the same root issue: remote access depends on the production network.

When that network fails, whether due to routing, security, WAN, or service issues, engineers lose the ability to reach the infrastructure they need to fix. That’s when recovery slows down, truck rolls and labor costs increase, and SLA risks rise.

Image: When remote management access depends on the production network, outages cut off both links, leaving engineers unable to remotely recover.

What should be routine incidents turn into operational disruptions. Engineers are unable to gain remote console access for recovery, and any tools running on the production network become useless. The only way to bring the network back online is to put engineers on site.

How To Overcome The Top Network Failure Scenarios

VPNs and jump hosts are effective tools for day-to-day operations. But MSPs won’t be able to overcome these failure scenarios if VPNs and jump hosts are the only path to critical infrastructure.

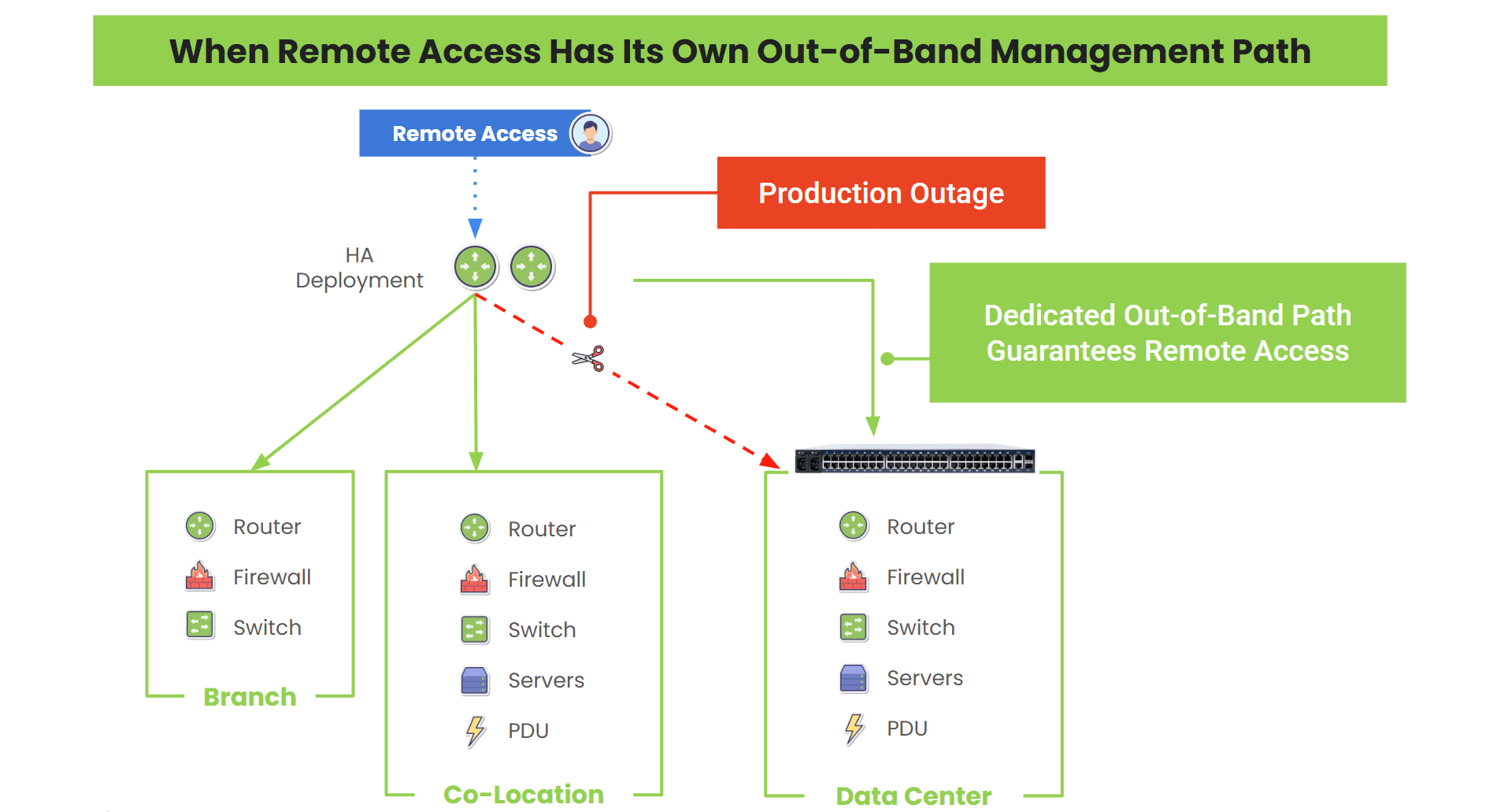

The key is being able to maintain access even when the production network goes down.

This is where out-of-band (OOB) management and isolated management infrastructure (IMI) come into play. These create a completely separate remote access path that remains available no matter what kind of outage hits the production network.

Image: A dedicated out-of-band management path ensures engineers can remotely access their infrastructure, even when there’s a complete outage on the production network.
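The idea of a second, independent path can be sketched as a simple ordered fallback: try the in-band route first, then the out-of-band one. The `AccessPath` type, hostnames, and ports below are hypothetical, and `tcp_probe` is just one possible reachability check, not a description of any particular OOB product.

```python
import socket
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessPath:
    name: str
    host: str
    port: int


def tcp_probe(path, timeout=3.0):
    """Return True if a TCP connection to the path opens within the timeout."""
    try:
        with socket.create_connection((path.host, path.port), timeout=timeout):
            return True
    except OSError:
        return False


def choose_access_path(paths, probe=tcp_probe):
    """Return the first reachable path in priority order, or None if all fail."""
    for path in paths:
        if probe(path):
            return path
    return None


# Hypothetical endpoints for illustration only.
PATHS = [
    AccessPath("in-band VPN", "vpn.example.net", 443),
    AccessPath("out-of-band console", "oob.example.net", 22),
]


def production_down(path):
    """Simulated probe: the in-band path is unreachable, the OOB path is not."""
    return path.name != "in-band VPN"


selected = choose_access_path(PATHS, probe=production_down)
print("using:", selected.name if selected else "no path available")
```

The fallback is only meaningful if the second path shares nothing with the first. If both entries depend on the same WAN circuit or firewall, every probe fails together.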

What Can Engineers Do With Out-of-Band?

Modern OOB and IMI setups allow engineers to see what’s going on and act, no matter what’s happening on the production network.

This dedicated management path means MSP teams can:

- Access device consoles directly, even if routing is broken

- Perform config rollbacks on routers and firewalls after failed changes

- Power-cycle/reboot equipment remotely (no on-site help needed)

- Troubleshoot WAN failures from inside the network

- Maintain access to infrastructure during ISP outages or authentication failures

Outages that would normally drag on for hours can now be resolved in minutes from the NOC. Check out our demonstration video to see what this looks like in action!

Calculate the Impact of MSP Network Failures

The most important question to ask is: can your engineers still reach the infrastructure when the network itself is down?

If the answer is no, it’s time to calculate how much these failure scenarios are costing in truck rolls, labor, and SLA penalties.
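As a starting point, a back-of-the-envelope version of that calculation might look like the sketch below. The formula and every figure in it are illustrative assumptions, not data from this article or any worksheet; substitute your own numbers.

```python
def annual_outage_cost(
    incidents_per_year,
    truck_roll_cost,
    engineer_hours_per_incident,
    hourly_rate,
    sla_penalty_per_incident,
):
    """Estimate yearly cost of outages that require an on-site visit."""
    labor_cost = engineer_hours_per_incident * hourly_rate
    per_incident = truck_roll_cost + labor_cost + sla_penalty_per_incident
    return incidents_per_year * per_incident


# Hypothetical inputs for illustration only.
estimate = annual_outage_cost(
    incidents_per_year=12,
    truck_roll_cost=500,
    engineer_hours_per_incident=4,
    hourly_rate=100,
    sla_penalty_per_incident=1000,
)
print(f"Estimated annual cost: ${estimate:,}")
```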

Use the MSP Downtime Cost Worksheet to quantify your exposure and see how much faster recovery could improve your margins.