3 Data Center Management Challenges—and How to Solve Them for Good

Data center infrastructure adds an extra layer of complexity to enterprise networks since you need to remotely manage hardware at scale. The network perimeter needs to extend across a geographical distance—which could be several miles or several continents—while maintaining your enterprise infrastructure’s visibility, security, performance, and availability. Accomplishing these goals is often a challenge for engineers.

However, every challenge is an opportunity to learn and improve. Here are the top three data center management challenges, along with solutions that help you avoid or overcome them while optimizing your network infrastructure.

Top 3 data center management challenges and their solutions

The challenge: Infrastructure monitoring and visibility

One of the most significant pain points for network engineers managing data center infrastructure is the difficulty of gaining complete, real-time monitoring and visibility of remote systems.

Different vendors may offer varying degrees of remote monitoring for their devices, but managing a patchwork of monitoring tools is time-consuming and can leave gaps in coverage. Even a minor issue with critical data center infrastructure can balloon into an enterprise-wide catastrophe if it goes unnoticed for too long. For example, a database server generating redundant or unnecessary logs seems like no big deal at first. However, if those logs accumulate until the hard drive fills up and database operations fail, the failure could impact your enterprise applications, financial systems, and more. Because of this, it is essential to make sure nothing falls through the cracks.
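To make the log-growth example concrete, here is a minimal sketch of the kind of check a monitoring tool might run on a server. It uses Python's standard `shutil.disk_usage`; the function names and the 80% and 95% thresholds are assumptions chosen for illustration, not values from any particular product.

```python
import shutil


def disk_usage_percent(path: str) -> float:
    """Return how full the filesystem containing `path` is, as a percentage."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total * 100


def classify_usage(percent: float, warn_at: float = 80.0, critical_at: float = 95.0) -> str:
    """Map a usage percentage to a status; the thresholds are illustrative assumptions."""
    if percent >= critical_at:
        return "critical"
    if percent >= warn_at:
        return "warning"
    return "ok"


if __name__ == "__main__":
    pct = disk_usage_percent("/")
    print(f"/ is {pct:.1f}% full: {classify_usage(pct)}")
```

A check like this only helps if it runs on every system and reports to one place, which is the gap the next section addresses.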

The solution: Data center infrastructure management solutions

As the name suggests, a data center infrastructure management (DCIM) solution provides a centralized platform for managing data center infrastructure. DCIM gives you a bird’s-eye view of physical and digital assets so you can track network traffic loads, hardware and VM performance, power usage, and environmental conditions in the data center in real time.

Additionally, DCIM consolidates all the data center devices under one management UI, allowing you to monitor and administer all systems efficiently in the same place. DCIM provides complete visibility of your data center infrastructure while simplifying and streamlining data center management for IT teams. To fully reap the benefits of DCIM, look for a solution that seamlessly integrates both digital and physical assets, so you have full visibility into your cloud-based and hardware-based infrastructure.

The challenge: Data center network security

Just because the critical infrastructure is hosted in a secure data center doesn’t mean it is entirely safe from cyberattacks. Another common data center management challenge involves maintaining enterprise security policies and controls across one or more colocation sites. This can be especially difficult if you utilize managed services and provide access to data center employees or third-party vendors.

According to a recent study, 74% of organizations that reported a breach said it resulted from giving third parties too much privileged access. You need a way to verify the identity and trustworthiness of any account trying to access data center resources, as well as to apply enterprise security policies consistently across all your remote infrastructure.

The solution: Zero trust security with identity and access management (IAM)

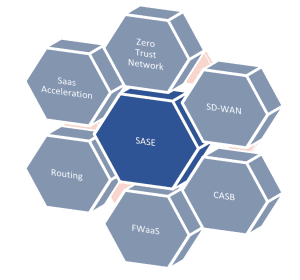

The zero trust security methodology forces enterprises to rethink their approach to trust and authentication in their IT environment. Instead of operating under the assumption that all authorized users are trustworthy, zero trust assumes that every user, device, and application is unsafe until proven otherwise. Zero trust security uses identity and access management (IAM) solutions to verify identities, apply enterprise security policies, and restrict access to only the systems that are necessary for the task at hand.

Zero trust security provides the framework to establish better data center network security through tighter security controls and precise security policies. An IAM solution is one of the key security controls that allow you to dynamically assess the identity and trustworthiness of data center staff, third-party vendors, and any other users or systems that access your data center resources. In that way, zero trust with IAM helps keep data center infrastructure secure.
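The default-deny idea behind zero trust can be sketched in a few lines. The example below is an illustration under stated assumptions, not how any specific IAM product works: the `AccessRequest` fields, the `GRANTS` table, and the user and resource names are all hypothetical, and a real deployment would get identity and device signals from its IAM provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    identity_verified: bool  # e.g. the IAM provider confirmed a successful MFA login
    device_compliant: bool   # e.g. the device passed a posture check
    resource: str


# Hypothetical least-privilege grants: each account lists only the systems it needs.
GRANTS = {
    "vendor-hvac": {"pdu-rack-12"},
    "netops-alice": {"core-switch-1", "core-switch-2"},
}


def is_allowed(request: AccessRequest, grants: dict = GRANTS) -> bool:
    """Deny by default: every check must pass before access is granted."""
    if not request.identity_verified:
        return False
    if not request.device_compliant:
        return False
    # Unknown users get an empty grant set, so they are denied as well.
    return request.resource in grants.get(request.user, set())


if __name__ == "__main__":
    request = AccessRequest("vendor-hvac", True, True, "core-switch-1")
    print(is_allowed(request))  # the vendor has no grant for the core switch
```

The design choice worth noting is that nothing is trusted implicitly: a verified third-party vendor still reaches only the one system listed for its account.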

The challenge: Performance and availability

Your ultimate data center management goal is to maintain the data center infrastructure’s high performance and availability so your organization can run as efficiently as possible. That can be incredibly challenging when managing geographically diverse data centers without local staff or managed services at every location. If a critical switch goes down at a data center 3,000 miles away, you need to get it back up and running fast—which means you don’t have time to fly an engineer to the other side of the country to get eyes on the problem.

The solution: Out-of-band management

Out-of-band (OOB) management separates the management plane from the production network, enabling you to remotely troubleshoot, monitor, and administer your data center infrastructure without relying on the production LAN or primary ISP connection. For example, using a dedicated management network over a 4G LTE cellular connection, you can reach routers, switches, and servers through their console ports even when they have no reachable IP address. With OOB management, you can perform remote access and control tasks on multiple devices from one pane of glass. That means you can reboot devices, perform health checks, and troubleshoot connection problems remotely from anywhere in the world at any time, which is how out-of-band management improves data center performance and availability.
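As a rough illustration of the fallback logic, the sketch below probes the normal in-band management address first and falls back to a separate out-of-band address when the production path is down. The host names, ports, and function names are hypothetical placeholders; a real OOB deployment would typically connect to a console server rather than run a simple TCP probe.

```python
import socket
from typing import Callable, Optional, Tuple

Endpoint = Tuple[str, int]


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def choose_path(
    in_band: Endpoint,
    out_of_band: Endpoint,
    probe: Callable[[str, int], bool] = tcp_reachable,
) -> Optional[str]:
    """Prefer the production path; fall back to the OOB path if it is unreachable."""
    if probe(*in_band):
        return "in-band"
    if probe(*out_of_band):
        return "out-of-band"
    return None  # neither path answered, so the problem needs escalation


if __name__ == "__main__":
    # Hypothetical addresses: the switch's SSH port and a console server on the OOB network.
    path = choose_path(("switch1.dc.example.net", 22), ("console1.oob.example.net", 22))
    print(path or "unreachable on both paths")
```

The `probe` parameter is injectable so the selection logic can be exercised without touching a live network.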

Solving data center management challenges with the right solutions

Three of the biggest data center management challenges involve monitoring and securing your infrastructure while ensuring high performance and maximum availability. To overcome these challenges, you should invest in tools that provide data center infrastructure management (DCIM), zero trust security with identity and access management (IAM), and out-of-band (OOB) network management, such as ZPE Systems’ Nodegrid.

Nodegrid is a vendor-neutral platform of hardware and software solutions to overcome your data center management challenges. Nodegrid’s serial consoles and management interface monitor and administer all your critical data center infrastructure and physical assets behind one pane of glass. Nodegrid also provides out-of-band management solutions to troubleshoot your network from anywhere in the world, even during an outage. Plus, you can use Nodegrid’s Zero Trust Security Framework to integrate zero trust principles and IAM providers with your data center management solutions.

To learn more about how ZPE Nodegrid can help you overcome the top data center management challenges, contact us today!
