What Is Edge Computing for Machine Learning?

What is edge computing?

For many modern enterprises, most data is no longer generated in a centralized data center or office building. Instead, it comes from IoT devices, “smart” industrial systems, and other remote locations around the globe. Transferring all that data to and from a central data center for processing can introduce latency and degrade application performance. Transmitting sensitive data over the internet also increases the risk of interception by hackers.

Edge computing moves computational power closer to the source of data so that data doesn’t need to be sent to a separate location for processing. Because the data doesn’t travel as far, latency drops and application performance improves. Plus, the data stays behind the firewall on the local network, which reduces security risks.

What is edge computing for machine learning?

Machine learning (ML) is powered by data, and with data moving to the edge of enterprise networks, machine learning needs to decentralize as well. Edge computing for machine learning places ML applications closer to remote sources of data. The benefits of edge computing for machine learning are the same as those of edge computing in general, only more pronounced.

Machine learning requires data to make intelligent predictions and decisions. In many cases, that data originates from the edge of the network. For example, the healthcare industry uses ML algorithms to analyze health data from smart devices in hospitals and clinics around the world, sometimes in hard-to-reach and politically unstable regions.

Getting patient health data from these remote facilities back to a centralized data center for machine learning processing can be very challenging, especially if the internet infrastructure is outdated or inconsistent. In addition, this data is personal and sensitive, and healthcare organizations are obligated to ensure its protection, so transferring it over uncertain internet connections is too risky.

Instead, organizations can install the ML algorithm on servers in each remote facility, or even on the smart devices themselves. This drastically reduces their reliance on outside network infrastructure for running machine learning workloads, which improves performance and helps keep patient health data private.
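To make the idea concrete, here is a minimal Python sketch of on-site inference. Everything in it is an illustrative assumption rather than a real clinical model: the `Reading` fields, the `WEIGHTS` and `BIAS` values, and the `summarize_locally` helper are all hypothetical. The point is only that raw patient readings are scored inside the facility, and the only thing that would need to leave the site is a small summary with no identifiers.

```python
import math
from dataclasses import dataclass


@dataclass
class Reading:
    patient_id: str    # sensitive: stays on the local network in this sketch
    heart_rate: float  # beats per minute
    spo2: float        # blood oxygen saturation, percent


# Hypothetical weights standing in for a model trained elsewhere
# and copied to the edge server or device.
WEIGHTS = {"heart_rate": 0.04, "spo2": -0.30}
BIAS = 24.0


def risk_score(reading: Reading) -> float:
    """Score one reading locally with a tiny logistic model (0.0 to 1.0)."""
    z = (
        WEIGHTS["heart_rate"] * reading.heart_rate
        + WEIGHTS["spo2"] * reading.spo2
        + BIAS
    )
    return 1.0 / (1.0 + math.exp(-z))


def summarize_locally(readings, threshold=0.5):
    """Run inference on-site and return only counts, never identifiers."""
    scores = [risk_score(r) for r in readings]
    flagged = sum(1 for s in scores if s >= threshold)
    return {"n_readings": len(scores), "n_flagged": flagged}


if __name__ == "__main__":
    batch = [
        Reading("patient-001", heart_rate=70, spo2=98),
        Reading("patient-002", heart_rate=120, spo2=88),
    ]
    # Only this summary would be sent upstream, if anything is sent at all.
    print(summarize_locally(batch))
```

In a real deployment the model would typically be loaded from a trained artifact rather than hard-coded, but the data flow is the same: inference happens next to the data, and the WAN link carries results instead of records.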

How to deploy edge computing for machine learning

There are two basic deployment models for machine learning at the edge.

A traditional edge machine learning deployment uses one or more racks of heavy-duty servers with high-performance machine learning processing units. This deployment model is best suited to large ML workloads that process massive amounts of edge data.

A “thin” or “nano” edge machine learning deployment runs on smaller servers or multi-purpose devices that share rack space with other edge infrastructure. This deployment model is more cost-effective and works best for smaller ML workloads in buildings where space is limited.

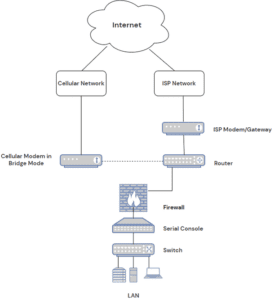

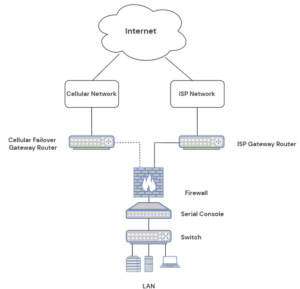

For either deployment model, you need a solution in place for remote management so administrators can maintain and troubleshoot edge infrastructure without traveling on-site. The best way to ensure reliable management access is through out-of-band (OOB) management. OOB management creates a separate network dedicated to remote management and troubleshooting. This network provides an alternative path to remote infrastructure (typically via cellular LTE) in case the primary ISP or WAN link goes down.

Through that OOB management network, you can orchestrate workloads, push out security patches, and monitor the health and performance of edge infrastructure.
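The fallback behavior described above can be sketched in a few lines of Python. This is a simplified illustration, not how any particular OOB product works: the `pick_management_path` function and the two probe callables are hypothetical names, and a real probe might be a ping or TCP health check against the site's management address.

```python
from typing import Callable, Optional


def pick_management_path(
    primary_reachable: Callable[[], bool],
    oob_reachable: Callable[[], bool],
) -> Optional[str]:
    """Choose a path for a management session to a remote edge site.

    Each argument is a caller-supplied probe that returns True when
    the site answers over that link.
    """
    if primary_reachable():
        return "primary"  # main ISP or WAN link is healthy
    if oob_reachable():
        return "oob"      # fall back to the dedicated OOB link, e.g. cellular
    return None           # site unreachable on both paths


if __name__ == "__main__":
    # Simulate a WAN outage where the cellular OOB link still works.
    print(pick_management_path(lambda: False, lambda: True))
```

Passing the probes in as functions keeps the decision logic separate from the network checks, so the same logic can be exercised with simulated outages before it is pointed at real links.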

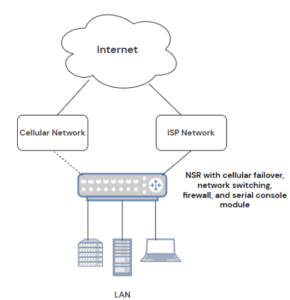

Deploy and manage edge computing machine learning infrastructure with Nodegrid

The Nodegrid Net Services Router (NSR) from ZPE Systems supports both traditional and nano edge computing machine learning deployment models. The NSR is a modular and customizable solution that delivers OOB management, cellular failover, edge routing and switching, and automation in a single device.

You can use the NSR’s serial console modules to monitor, manage, and orchestrate an entire rack of edge machine learning servers. For less intensive workloads, you can use the edge compute module to host ML applications, virtual machines, and Docker images.

Either way, you can take advantage of 5G/4G LTE to ensure fast and reliable OOB access and cellular failover. The NSR is also secured by Zero Trust features like SAML 2.0 integration and BIOS protection to keep edge machine learning data protected.

Ready to learn more about using 5G/4G LTE for fast and reliable OOB access?

To learn more about edge computing for machine learning with Nodegrid, contact ZPE Systems today.