Cisco 2900 EOL: Replacement Options

The Cisco ISR 2900 series of branch routers reached end-of-sale (EOS) on the 9th of December 2017, and Cisco concluded support on the 31st of December 2022. In this guide, we’ll compare migration options for the Cisco ISR 2900 EOL models to help you select a solution that supports your business use case, deployment size, and future growth.

Disclaimer: This comparison was written by a third party in collaboration with ZPE Systems using data gathered from publicly available data sheets and admin guides, as of 5/12/2023. Please email us if you have corrections or edits, or want to review additional attributes: Matrix@zpesystems.com


Cisco ISR 2900 overview

The Cisco ISR 2900 is a line of enterprise gateway routers designed for branch and edge networking. It’s a modular solution that can be expanded with optional Network Interface Modules (NIMs) and Service Modules (SMs) for more functionality. There are two primary use cases for the 2900:

Converged branch networking – The ISR 2900 easily integrates with Cisco’s SD-WAN, SD-Branch, cloud security, and DNA network management software, can be extended with optional modules for added hardware capabilities, and supports NFV (network functions virtualization) for all-in-one branch networking.

Out-of-band (OOB) management – With serial port modules installed, the ISR 2900 acts as an OOB serial console solution that provides remote management access to the control plane of branch infrastructure.

The ISR 2900 is officially EOL as of the 31st of December 2022. The EOL models include all 2901, 2911, 2921, and 2951 ISR product SKUs.
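If you keep a device inventory, a short script can help locate the affected units. The following is a minimal sketch, not a definitive audit tool: it assumes the inventory is a simple mapping of hostname to SKU string, and the hostnames and the `find_eol_devices` helper are hypothetical names used only for illustration. It matches the four model numbers listed above as whole numeric tokens, so the results should still be reviewed by hand in case an unrelated SKU contains the same digits.

```python
import re

# Model numbers named in this guide as end-of-life.
EOL_MODELS = ("2901", "2911", "2921", "2951")

# Match a model number only when it is not part of a longer run of digits,
# e.g. "CISCO2911/K9" matches but "29110" does not.
_MODEL_RE = re.compile(r"(?<!\d)(" + "|".join(EOL_MODELS) + r")(?!\d)")


def find_eol_devices(inventory):
    """Return (hostname, model) pairs for SKUs that name an EOL 2900 model.

    `inventory` is assumed to be a dict of hostname -> SKU string.
    """
    flagged = []
    for hostname, sku in inventory.items():
        match = _MODEL_RE.search(sku)
        if match:
            flagged.append((hostname, match.group(1)))
    return flagged


if __name__ == "__main__":
    # Illustrative inventory; the hostnames are made up.
    sample_inventory = {
        "branch-01": "CISCO2911/K9",
        "branch-02": "C1111-8P",
        "branch-03": "CISCO2951-SEC/K9",
        "hq-01": "C8300-2N2S-6T",
    }
    for hostname, model in find_eol_devices(sample_inventory):
        print(f"{hostname}: ISR {model} is EOL, plan a replacement")
```

In practice the inventory would more likely come from a CMDB export or a network management system; the matching logic stays the same.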

| Looking for replacement options for your other Cisco ISR EOL products? Read our guide to Cisco ISR EOL Replacement Options. |

Cisco 2900 EOL replacement options

The discontinuation of the Cisco 2900 has left many organizations looking for migration options. Let’s compare two direct replacements from Cisco before discussing alternative options that deliver better branch management capabilities and greater opportunities for automation.

Cisco ISR 1100

Cisco ISR 1100 is a series of enterprise branch routers, though in this comparison we’re only looking at the models that support SD-WAN and thus serve as direct replacements for the discontinued 2900 models. The capabilities of the 1100 series vary, mostly because only some of the models are modular. For example, the fixed form-factor 1100-4G/4G LTE models have cellular functionality but offer fewer networking and security features. Conversely, the 1161X-8P and 112x-8P models are modular and can be extended with optional modules (like cellular for the 1161X or terminal server ports for the 112x-8P).

Even with these expansions, the compact ISR 1100s are best suited for smaller deployments in branch offices or small, provider-managed edge data centers. If your organization uses the ISR 2900 for converged branch networking, the 1100s are the closest Cisco replacement, though they support OOB serial modules as well.

Cisco Catalyst C8300

The Cisco Catalyst C8300 series is a modular branch and edge networking solution, though due to its large size, it’s sometimes used as a primary on-premises gateway router. There are four models to choose from: two 2RU units with 2 SM and 2 NIM slots, and two 1RU units with 1 SM and 1 NIM slot. Each chassis comes with 6 embedded Layer 3 Ethernet ports (1 Gbps and/or 10 Gbps) as well as a console port and USB port. All other port configurations and capabilities come via Cisco expansion modules, including options for 5G/4G cellular.

The Catalyst C8300 is a large, robust solution designed for medium to large deployments such as campuses, colocation sites, and AI/machine learning data centers. Like the ISR 1100 series, the C8300 is primarily a converged branch networking solution, but it also provides OOB management with optional serial cards.

Cisco 2900 replacement option comparison table

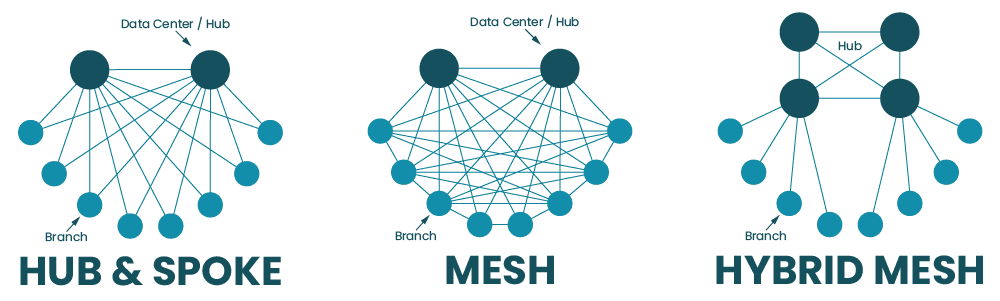

For users looking for a Cisco solution to replace their EOL ISR 2900, the ISR 1100 series and Catalyst C8300 are the closest direct replacements. However, both product lines suffer from a major limitation – they aren’t vendor-neutral.

While Cisco routers integrate with some third-party partners, they do not support custom or third-party applications for automation and orchestration, which limits you to the automation offered by Cisco’s software. This lack of open integrations increases the chances that a Cisco solution won’t be able to hook into all the hardware and software components of a distributed and multi-vendor network architecture.

For example, if you utilize different SD-WAN and next-generation firewall (NGFW) vendors at some of your remote sites, Cisco’s automation may not extend to these devices. That means you’ll need to send out technicians to all remote sites (which could number in the dozens or hundreds) just to set up these services when you otherwise could have deployed them automatically.

| Want to learn more about breaking free of locked ecosystems? Read The Benefits of Vendor Agnostic Platforms in Network Management |

When network solutions like the Cisco 2900 go EOL, it’s the perfect opportunity to look for alternative options that provide the functionality you need without locking you into an ecosystem or limiting your automation capabilities.

Cisco 2900 direct replacement options from ZPE Systems

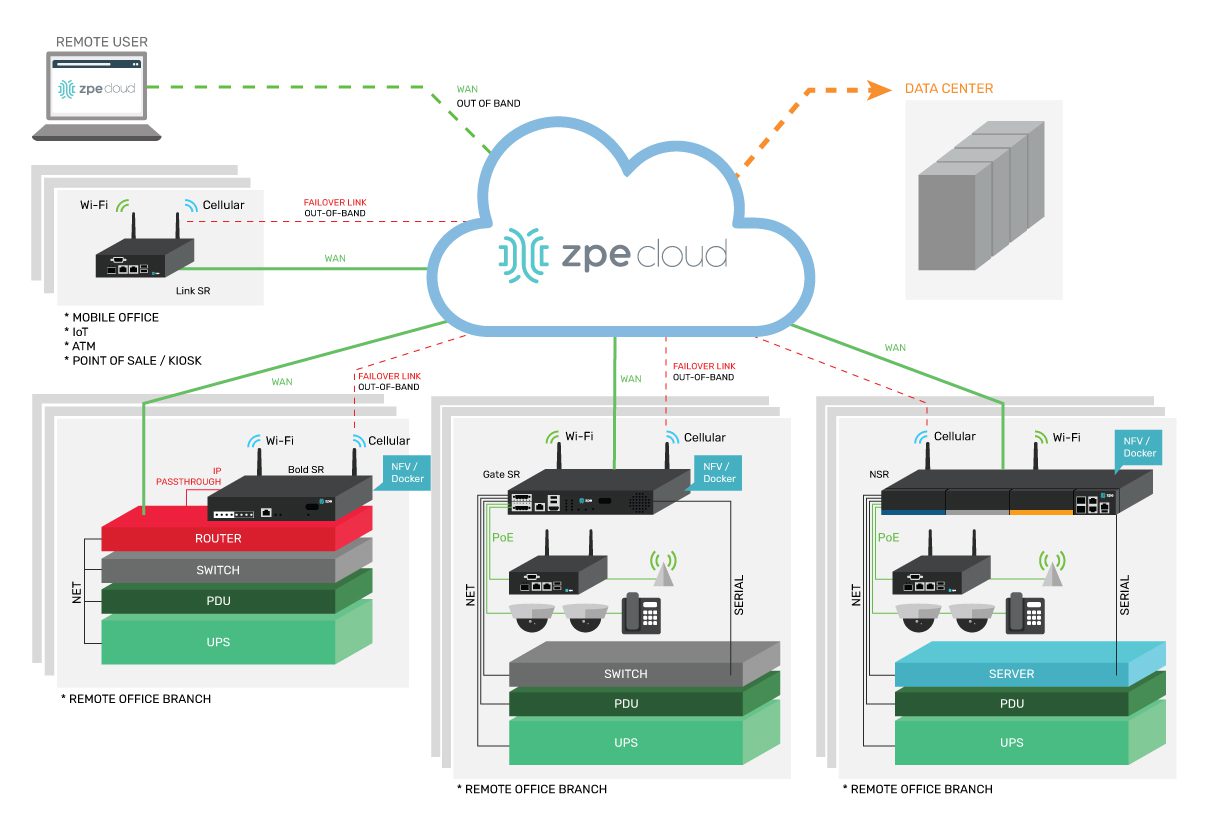

ZPE Systems provides a line of vendor-neutral solutions for branch and edge networking called Nodegrid. The Nodegrid Net Services Router (NSR) and Nodegrid Serial Console Plus (NSCP) serve as direct replacements for Cisco 2900 EOL products.

Nodegrid Net Services Router (NSR)

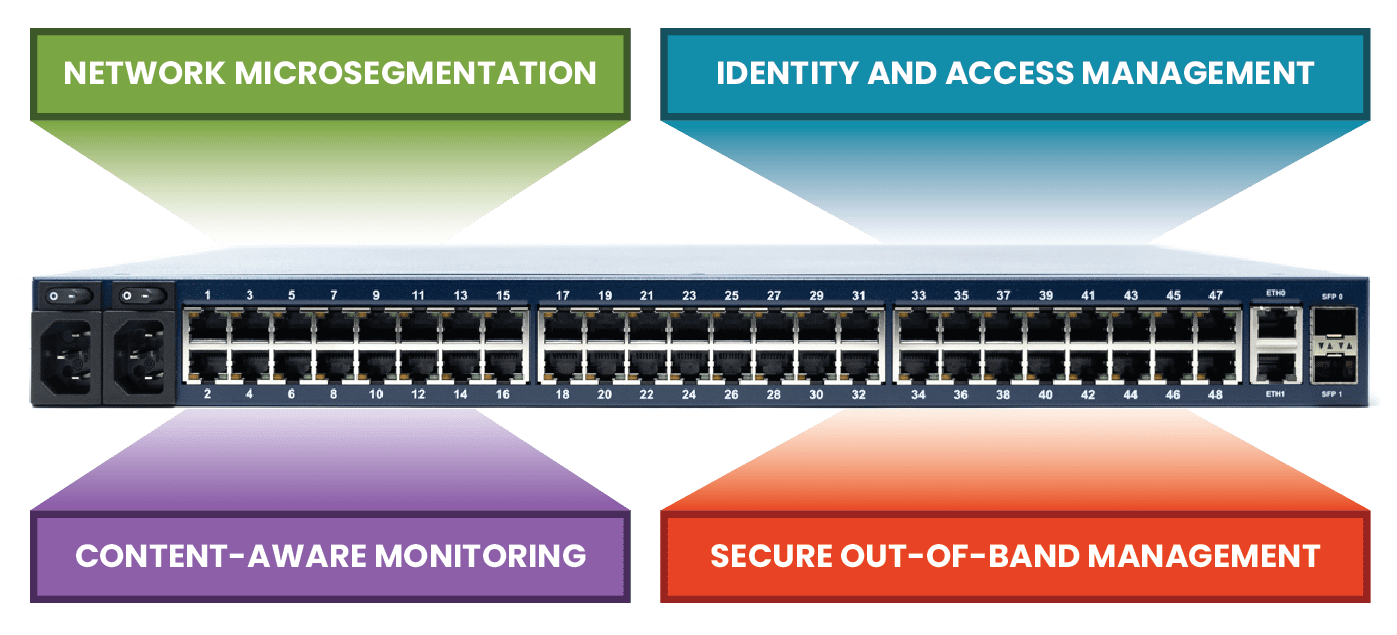

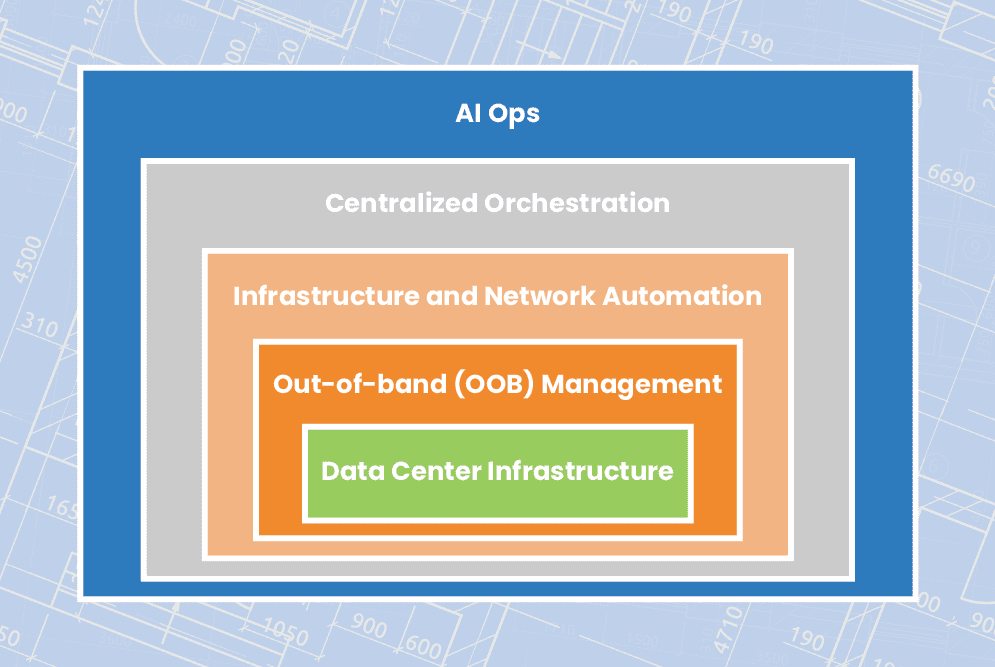

The Nodegrid NSR is a modular branch networking solution that you can customize to increase your terminal server ports, storage space, processing power, or switch ports. The NSR delivers converged branch networking capabilities like SD-WAN, SD-Branch, and NFVs, plus it can host your choice of custom and third-party applications for automation, security, and more.

While the NSR is the perfect converged branch solution to replace the Cisco ISR 2900, it also provides third-generation (Gen 3) OOB management. That means Nodegrid’s OOB network is completely vendor-neutral and can extend automation capabilities to all your legacy and mixed-vendor infrastructure for efficient deployments, management, and orchestration.

| Want to see the Nodegrid converged branch networking solution in action? Watch a Demo |

Nodegrid Serial Console Plus (NSCP)

The NSCP is a robust, scalable branch networking and out-of-band serial console solution. The NSCP comes in 16-, 32-, 48-, and 96-port models, so you can choose the solution that’s right-sized to your deployment and use case. Plus, you can get built-in 5G/4G LTE and Wi-Fi options for failover and out-of-band.
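Right-sizing comes down to picking the smallest port count that covers your serial devices plus any headroom you want for growth. The sketch below illustrates that arithmetic using the four port counts listed above; the function name `smallest_nscp_model` and the `spare_ports` parameter are hypothetical, and the result is only a starting point, since cellular, Wi-Fi, and other options also affect model choice.

```python
import bisect

# NSCP port counts listed in this guide, in ascending order.
NSCP_PORT_COUNTS = [16, 32, 48, 96]


def smallest_nscp_model(devices, spare_ports=0):
    """Return the smallest listed port count that fits, or None if none does.

    `devices` is the number of serial devices to connect and `spare_ports`
    is optional headroom for growth.
    """
    if devices < 0 or spare_ports < 0:
        raise ValueError("devices and spare_ports must be non-negative")
    needed = devices + spare_ports
    # Index of the first port count that is >= needed.
    idx = bisect.bisect_left(NSCP_PORT_COUNTS, needed)
    if idx == len(NSCP_PORT_COUNTS):
        return None  # More ports needed than a single listed unit provides.
    return NSCP_PORT_COUNTS[idx]


if __name__ == "__main__":
    # 40 devices plus 10 spare ports need 50 ports, so the 96-port unit fits.
    print(smallest_nscp_model(40, spare_ports=10))
```

When the function returns `None`, the site needs more than 96 ports and would have to be split across multiple units.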

Like the NSR, the NSCP is an open platform that can run your choice of software to expand your capabilities and reduce your tech stack. It also delivers Gen 3 OOB management of all connected infrastructure, enabling true end-to-end automation in data centers, branches, and other remote sites. The NSCP is the perfect replacement for enterprises utilizing the Cisco 2900 for out-of-band management, though it also provides converged branch networking capabilities at any scale.

All Nodegrid devices run the open, Linux-based Nodegrid OS, which can host your choice of third-party or custom applications, freeing you from vendor lock-in. You can even integrate infrastructure orchestration tools like Puppet, Chef, and Ansible to extend automation to end devices, regardless of vendor. This is what makes Nodegrid the world’s first Gen 3 branch networking solution.

| Want to see how Nodegrid stacks up against Cisco’s replacement options? Click here to download the services routers comparative matrix. |

Global support and supply chain

Leaving a trusted ecosystem behind to adopt alternative options can be risky, so it’s important to find a vendor that offers the support you need to make the transition and keep your operations running smoothly. ZPE Systems offers global product support using the “follow the sun” model, which means you get support when you need it, regardless of your time zone. You also won’t have to worry about supply chain issues causing stock shortages: ZPE supplies hyperscalers with 10K+ units per quarter and maintains consistent control over its supply chain.

Need to replace your Cisco 2900 EOL?

To learn more about replacing your Cisco 2900 EOL solution with the vendor-neutral Nodegrid platform, which can ship in as little as two weeks, contact ZPE Systems today.

Cisco 2900 EOL product tables with migration SKUs

| Want to see how Nodegrid compares to other serial console solutions? |