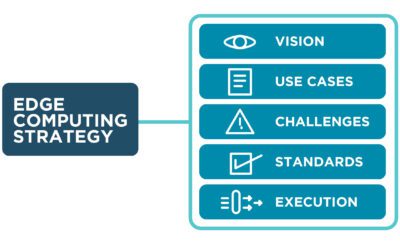

Discussing the Gartner Market Guide for Edge Computing and its recommendations for building a strategy to manage and orchestrate your edge solutions.

Month: December 2023

Exploring ZPE Cloud – Tech Talk Tuesday from ZPE Systems

Todd Atherton (Channel Sales Director) and Marc Westberg (Channel Sales Engineer) walk you through the benefits of using ZPE Cloud. This fleet management solution offers centralized access to and control over your devices worldwide, and it enables true zero-touch provisioning via the cloud, eliminating the hassle and risk of pre-staging equipment.

The Industry’s Most Secure Network Management – Tech Talk Tuesday from ZPE Systems

ZPE Systems’ Nodegrid OS and ZPE Cloud have been validated by Synopsys as the industry’s most secure network management platform.

How Many Types of Managed Devices?! – Tech Talk Tuesday from ZPE Systems

Forget everything you know about serial consoles and out-of-band management. Todd Atherton (Channel Sales Director) and Marc Westberg (Channel Sales Engineer) cover the many types of devices you can manage using ZPE Systems' Nodegrid, a versatile and robust platform for centralized management.

What is a Hyperscale Data Center?

This blog post defines a hyperscale data center deployment and then discusses the unique challenges of managing and supporting such an architecture.

Cellular Functionality – Tech Talk Tuesday from ZPE Systems

Todd Atherton (Channel Sales Director) and Marc Westberg (Channel Sales Engineer) show you everything ZPE Systems' cellular connectivity can do. With dual- and quad-SIM capabilities, you get the freedom and reliability of cellular for any use case.