A supply chain attack is when cybercriminals breach your network by compromising an outside vendor or partner. Often, these attacks exploit a weak link in your trusted ecosystem of third-party...

Month: May 2022

Why You Need a Next-Gen OOB Console Server

An OOB (out-of-band) console server is a fundamental data center tool that allows you to view, manage, and troubleshoot critical remote infrastructure on a dedicated network connection. While the...

Network Disaster Recovery Plan Checklist

Your organization may feel secure now, but a disaster could occur at any moment. For example, the war in Ukraine took the world by surprise and left many organizations scrambling to protect and...

What does 2001: A Space Odyssey have to do with network automation?

“I’m sorry, Dave, I’m afraid I can’t do that.” Those nine words couldn’t possibly be related to network automation. Or could they? Uttered famously by fictional supercomputer HAL 9000 in the 1968...

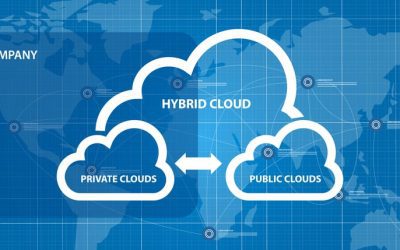

Orchestrating Hybrid Network Environments: Challenges, Solutions, and Best Practices

A hybrid network environment combines infrastructure from a public cloud with a private cloud and/or on-premises deployment. Your compute, storage, and service resources are distributed across...

Hyperautomation vs Automation: How Are They Different?

Automation can help you streamline network management in your enterprise by reducing human error, speeding up processes, and facilitating NetDevOps. Hyperautomation takes things a step further by...

How to Choose Secure Out-of-Band Management

Out-of-band access gives you an alternative path to manage your critical remote infrastructure at data centers, branch offices, and other distributed locations. However, that management link creates...